Perspectives on Enquiry & Evidence

Cytel's blog featuring the latest industry insights.

July 27, 2018

In this blog, we share a new infographic based on this popular blog post illustrating some of the critical interactions...

Read article

April 25, 2018

Overcoming Data Management Challenges in Immuno-Oncology Trials

Data management is an essential building block for successful Immuno-Oncology (I-O) trials. At the Immuno-Oncology...

Read article

May 4, 2017

The Data Management Perspective on the Interim Analysis

As a recognized expert in adaptive trials, Cytel has extensive experience designing and managing trials with interim...

Read article

December 6, 2016

Ensuring quality data no matter the phase: data management considerations

The management of quality clinical data collection is built on a number of core essentials, including project...

Read article

October 7, 2016

Adaptive Designs: A Data Management Perspective

Adaptive designs have the potential to accelerate clinical development and improve the probability of trial success....

Read article

September 27, 2016

Practical Challenges of the LUNG-MAP study

The Lung-MAP trial is an innovative biomarker-driven 'precision medicine' study which evaluates five novel agents for...

Read article

August 2, 2016

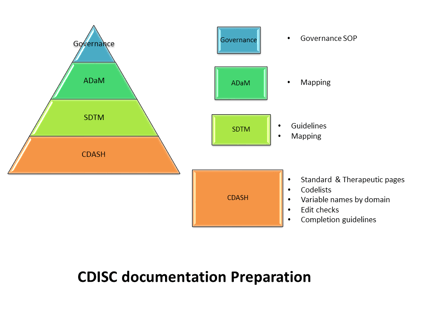

The CRO role in Data Standards Governance

Editor's note: this blog was refreshed in April 2018. As CDISC-compliant submissions become increasingly expected,...

Read article

July 28, 2016

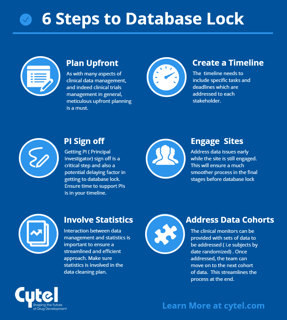

6 steps to timely database lock

To close a clinical database right the first time you have to begin with study start-up. Clearly, you can’t close a...

Read article

July 12, 2016

Key considerations in selecting an EDC system

How do you go about selecting the best Electronic Data Capture (EDC) system for your study? There is now a vast amount...

Read article

June 23, 2016

Getting the best out of your biometrics RFP

Vendor selection is a critical component of ensuring clinical trial success. A 2015 report (1) suggested that clinical...

Read article

April 26, 2016

Overcoming Data Management Challenges in Oncology Studies

In this blog we’ll highlight some unique challenges that are encountered from a Data Management perspective when...

Read article

April 20, 2016

Handling CDM data integrations

During the course of any clinical trial, there are often data which, while collected electronically, are outside of the...

Read article

April 15, 2016

5 Key Interactions of Clinical DM and Statistics

It's critical for biostatistics and data management to be closely aligned and working effectively together. The...

Read article

February 26, 2016

Getting Technical: The evolving role of the Data Manager

Remember the early days of Electronic Data Capture? Those first systems, which were revolutionary for their time...

Read article